PartyLoud - A Simple Tool To Generate Fake Web Browsing And Mitigate Tracking

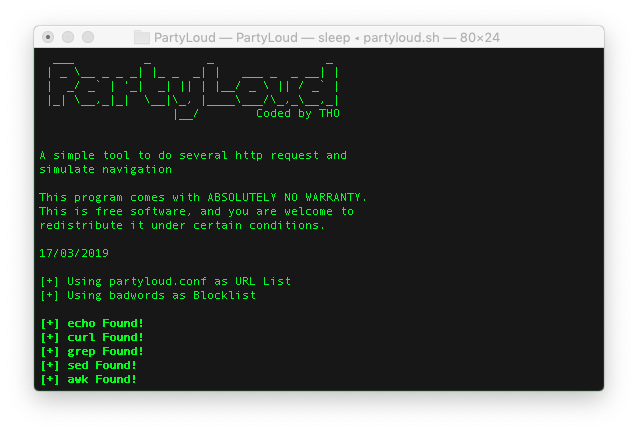

PartyLoud is a highly configurable and straightforward free tool that helps you prevent tracking directly from your Linux terminal, no special skills required. Once started, you can forget it is running. It provides several flags; each flag lets you customize your experience and change PartyLoud's behaviour according to your needs.

- Simple. 3 files only, no installation required, just clone this repo and you're ready to go.

- Powerful. Thread-based navigation.

- Stealthy. Optimized to emulate user navigation.

- Portable. You can use this script on any Unix-based OS.

This project was inspired by noisy.py.

How It Works

1. URLs and keywords are loaded (either from partyloud.conf and badwords or from user-defined files)

2. If a proxy flag has been used, the proxy configuration is tested

3. For each URL in the URL list a thread is started; each thread has its own user agent

4. Each thread starts by sending an HTTP request to the given URL

5. The response is filtered using the keywords in order to prevent 404s and malformed URLs

6. A new URL is chosen from the list generated after filtering

7. The current thread sleeps for a random time

8. Steps 4 to 7 are repeated using the new URL until the user stops the script (CTRL-C or the Enter key)
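The steps above can be sketched as one bash function per engine. This is a hypothetical illustration, not PartyLoud's actual code: the helper names (`extract_links`, `filter_links`, `pick_random`, `engine`), the href-scraping regex, the use of curl and the 1-10 second sleep range are all assumptions. Links are filtered with a case-insensitive fixed-string grep against the keyword file, and if a page yields no usable link the engine simply retries the current URL.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of one navigation "engine" (not PartyLoud's real code).

# Print every absolute http(s) link found in the HTML read from stdin.
extract_links() {
  grep -oE 'href="https?://[^"]+"' | sed -E 's/^href="//; s/"$//'
}

# Drop links containing any keyword listed (one per line) in file $1.
# -F: fixed strings, -i: case-insensitive, -v: keep non-matching lines.
filter_links() {
  grep -viF -f "$1"
}

# Print one random line read from stdin; fail if stdin is empty.
pick_random() {
  local lines=() line
  while IFS= read -r line; do lines+=("$line"); done
  (( ${#lines[@]} )) || return 1
  printf '%s\n' "${lines[RANDOM % ${#lines[@]}]}"
}

# Steps 4-7: request, filter, choose the next URL, sleep; loop until killed.
# Usage: engine <start-url> <blocklist-file> <user-agent>
engine() {
  local url=$1 blocklist=$2 agent=$3 next
  while true; do
    # If the page yields no usable link, keep the current URL (simple recovery).
    if next=$(curl -s -L -A "$agent" "$url" | extract_links |
              filter_links "$blocklist" | pick_random); then
      url=$next
    fi
    sleep $(( RANDOM % 10 + 1 ))
  done
}
```

Calling `engine https://example.com/ badwords "Mozilla/5.0 ..."` would loop until the process is killed; the helpers can be tried offline by piping a saved HTML page into `extract_links`.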

Features

- Configurable URL list and blocklist

- Random DNS mode: each request uses a different DNS server

- Multi-threaded request engine (the number of threads equals the number of URLs in partyloud.conf)

- Error recovery mechanism to protect engines from failures

- Spoofed user agents to hinder fingerprinting (each engine has a different user agent)

- Dynamic UI
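The thread-per-URL design and the per-engine user agent can be approximated in bash with one background job per URL. In this hypothetical sketch the user-agent strings, the `start_engines` and `agent_for` names and the placeholder `engine` are assumptions; agents are reused round-robin when there are more URLs than strings, which a real implementation would want to avoid to keep every engine distinct.

```shell
#!/usr/bin/env bash
# Hypothetical sketch: one background job per URL, each with its own user agent.

# Illustrative user-agent strings (assumed; not taken from PartyLoud).
AGENTS=(
  "Mozilla/5.0 (X11; Linux x86_64; rv:115.0) Gecko/20100101 Firefox/115.0"
  "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0 Safari/537.36"
  "Mozilla/5.0 (Macintosh; Intel Mac OS X 13_5) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/16.6 Safari/605.1.15"
)

# Placeholder engine: a real one would loop request/filter/sleep.
engine() {
  printf '%s|%s\n' "$1" "$2"
}

# User agent for engine number $1, cycling if there are more engines than agents.
agent_for() {
  printf '%s\n' "${AGENTS[$(( $1 % ${#AGENTS[@]} ))]}"
}

# Start one engine per non-empty line of URL file $1 and wait for all of them.
start_engines() {
  local i=0 url pids=()
  # Background jobs of a non-interactive shell ignore SIGINT,
  # so forward CTRL-C / TERM to every engine explicitly.
  trap 'kill "${pids[@]}" 2>/dev/null' INT TERM
  while IFS= read -r url || [ -n "$url" ]; do
    [ -n "$url" ] || continue
    engine "$url" "$(agent_for "$i")" &
    pids+=("$!")
    i=$(( i + 1 ))
  done < "$1"
  wait
}
```

Replacing the placeholder `engine` with a real fetch loop turns this into a long-running process; the `trap` is what lets CTRL-C stop every engine at once.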

Setup

Clone the repository:

git clone https://github.com/realtho/PartyLoud.git

Navigate to the directory and make the script executable:

cd PartyLoud

chmod +x partyloud.sh

Run 'partyloud':

./partyloud.sh

Usage

Usage: ./partyloud.sh [options...]

-d --dns <file> DNS servers are sourced from the specified FILE; each request will use a different DNS server in the list

!!WARNING THIS FEATURE IS EXPERIMENTAL!!

!!PLEASE REPORT ISSUES ON GITHUB!!

-l --url-list <file> read the URL list from the specified FILE

-b --blocklist <file> read the blocklist from the specified FILE

-p --http-proxy <http://ip:port> set an HTTP proxy

-s --https-proxy <https://ip:port> set an HTTPS proxy

-n --no-wait disable the wait between one request and the next

-h --help display this help

To stop the script, press either Enter or CTRL-C.
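A minimal sketch of how the options above could be parsed with a `while`/`case` loop. The variable names and return codes are hypothetical and PartyLoud's own parser may differ; the defaults follow the text (partyloud.conf and badwords, random DNS off).

```shell
#!/usr/bin/env bash
# Hypothetical parser for the documented options; the variable names
# (URL_LIST, BLOCKLIST, DNS_FILE, ...) are illustrative, not PartyLoud's.

URL_LIST="partyloud.conf"   # default URL list
BLOCKLIST="badwords"        # default keyword blocklist
DNS_FILE=""                 # empty = random DNS mode disabled
HTTP_PROXY_ADDR=""
HTTPS_PROXY_ADDR=""
NO_WAIT=0

# Returns 0 on success, 1 on a bad option, 2 if help was requested.
parse_args() {
  while [ $# -gt 0 ]; do
    case "$1" in
      -d|--dns|-l|--url-list|-b|--blocklist|-p|--http-proxy|-s|--https-proxy)
        # Every option in this group needs a value.
        if [ $# -lt 2 ]; then
          echo "Missing value for $1" >&2
          return 1
        fi
        case "$1" in
          -d|--dns)         DNS_FILE=$2 ;;
          -l|--url-list)    URL_LIST=$2 ;;
          -b|--blocklist)   BLOCKLIST=$2 ;;
          -p|--http-proxy)  HTTP_PROXY_ADDR=$2 ;;
          -s|--https-proxy) HTTPS_PROXY_ADDR=$2 ;;
        esac
        shift 2 ;;
      -n|--no-wait) NO_WAIT=1; shift ;;
      -h|--help)    echo "Usage: ./partyloud.sh [options...]"; return 2 ;;
      *)            echo "Unknown option: $1" >&2; return 1 ;;
    esac
  done
}
```

For example, `parse_args -l mysites.txt -n` selects a custom URL list and disables the wait between requests.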

File Specifications

In the current release there is no input validation on files.

If you find bugs or have suggestions on how to improve these features, please help me by opening an issue on GitHub.

Intro

If you don’t have special needs, the default config files are just fine to get you started.

Default files are located in:

Please note that file names and extensions are not important; only the content of the files matters.

badwords - Keywords-based blocklist

badwords is a keywords-based blocklist used to filter non-HTML content, images, documents and so on.

The default config has been created after several weeks of testing. If you really think you need a custom blocklist, my suggestion is to start by copying the default config and modifying it according to your needs.

Here are some hints on how to create a great blocklist file:

| DO ✅ | DON'T |
|---|---|
| Use only ASCII chars | Define one-site-only rules |
| Try to keep the rules as general as possible | Define case-sensitive rules |
| Prefer relative paths | Place more than one rule per line |
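Some of these hints can be checked mechanically. The following lint function is a hypothetical helper, not part of PartyLoud: it flags non-ASCII characters and lines that look like more than one rule, and it also flags lines containing `://`, on the assumption that a full URL is usually a one-site-only rule.

```shell
#!/usr/bin/env bash
# Hypothetical linter for a badwords-style blocklist (not part of PartyLoud).
# Prints "line N: reason" for each suspicious rule; returns 1 if any were found.
lint_blocklist() {
  local n=0 bad=0 rule
  local multi='[^[:space:]][[:space:]]+[^[:space:]]'
  while IFS= read -r rule || [ -n "$rule" ]; do
    n=$(( n + 1 ))
    [ -n "$rule" ] || continue
    # In the C locale, [^ -~] matches any byte outside printable ASCII.
    if printf '%s\n' "$rule" | LC_ALL=C grep -q '[^ -~]'; then
      echo "line $n: non-ASCII or control characters"; bad=1
    fi
    # Two whitespace-separated tokens suggest more than one rule per line.
    if [[ $rule =~ $multi ]]; then
      echo "line $n: looks like more than one rule"; bad=1
    fi
    # A full URL is probably a one-site-only rule.
    if [[ $rule == *://* ]]; then
      echo "line $n: full URL (probably a one-site-only rule)"; bad=1
    fi
  done < "$1"
  return "$bad"
}
```

Running `lint_blocklist badwords` on the shipped file is a quick sanity check before customising it.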

partyloud.conf - URL List

partyloud.conf is a URL list used as the starting point for the fake-navigation generators.

The goal here is to create a good list of sites containing a lot of URLs.

Aside from suggesting that you avoid Google, YouTube and social-network links, I really have no hints for you.

Note #1 - To work properly, the URLs must be well-formed

Note #2 - Even if the file contains 1000 lines, only 10 are used (the first 10; random selection is still a work in progress)

Note #3 - Only one URL per line is allowed
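PartyLoud does not validate its input files (see File Specifications), so the following is only an illustration of the three notes: it reads at most the first 10 lines, expects one URL per line, and treats a line as well-formed if it matches a simple `http(s)://host/path` pattern. The function name `load_urls` and the regex are assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical loader: read at most the first 10 lines of a URL list,
# one URL per line, and skip entries that are not well-formed.
load_urls() {
  local url n=0
  local re='^https?://[^[:space:]/]+(/[^[:space:]]*)?$'
  # "|| [ -n "$url" ]" also keeps a last line that lacks a trailing newline.
  while { IFS= read -r url || [ -n "$url" ]; } && [ "$n" -lt 10 ]; do
    n=$(( n + 1 ))
    if [[ $url =~ $re ]]; then
      printf '%s\n' "$url"
    else
      printf 'line %d skipped, not a well-formed URL: %s\n' "$n" "$url" >&2
    fi
  done < "$1"
}
```

Malformed lines still count toward the 10-line limit here, mirroring "first 10" literally rather than "first 10 valid".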

DNSList - DNS List

DNSList is a list of DNS servers used as the argument for the random DNS feature. Random DNS is not enabled by default, so the “default file” is really just a guideline and a test used while developing the function to see if everything was working as expected.

The only suggestion here is to add as many addresses as possible to increase randomness.

Note #1 - Only one address per line is allowed
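Random DNS mode can be pictured as drawing a fresh line from the DNS list before every request. The sketch below is hypothetical: `random_dns` and `is_ipv4` are invented helpers, the validator only understands IPv4, and curl's `--dns-servers` option (shown in a comment) works only when curl was built with the c-ares resolver. How PartyLoud itself applies the chosen server is not described here.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for a random-DNS mode (not PartyLoud's actual code).

# Print one random non-empty line of DNS list file $1.
random_dns() {
  local servers=() line
  while IFS= read -r line || [ -n "$line" ]; do
    if [ -n "$line" ]; then servers+=("$line"); fi
  done < "$1"
  (( ${#servers[@]} )) || return 1
  printf '%s\n' "${servers[RANDOM % ${#servers[@]}]}"
}

# Succeed if $1 is a dotted-quad IPv4 address (each octet 0-255).
is_ipv4() {
  local re='^([0-9]{1,3})\.([0-9]{1,3})\.([0-9]{1,3})\.([0-9]{1,3})$' i
  [[ $1 =~ $re ]] || return 1
  for i in 1 2 3 4; do
    # 10# forces base 10 so octets such as "08" are not read as octal.
    (( 10#${BASH_REMATCH[i]} <= 255 )) || return 1
  done
}

# Per request, one could then run (requires a c-ares build of curl):
#   curl --dns-servers "$(random_dns DNSList)" https://example.com/
```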

Reviewed by Zion3R on 8:30 AM
